March 3, 2026

Satya Nadella just said the quiet part out loud.

In a recent interview on the OMR Podcast, the CEO of Microsoft (the company that bet $13 billion on OpenAI and is building data centers around the world) made an admission that should stop every AI investor in their tracks:

"I always say that we are one sort of innovation away from the entire regime changing."

Read that again. The man running one of the most AI-invested companies on Earth is telling you the ground could shift under all of it. Tomorrow. Without warning.

And he's right.

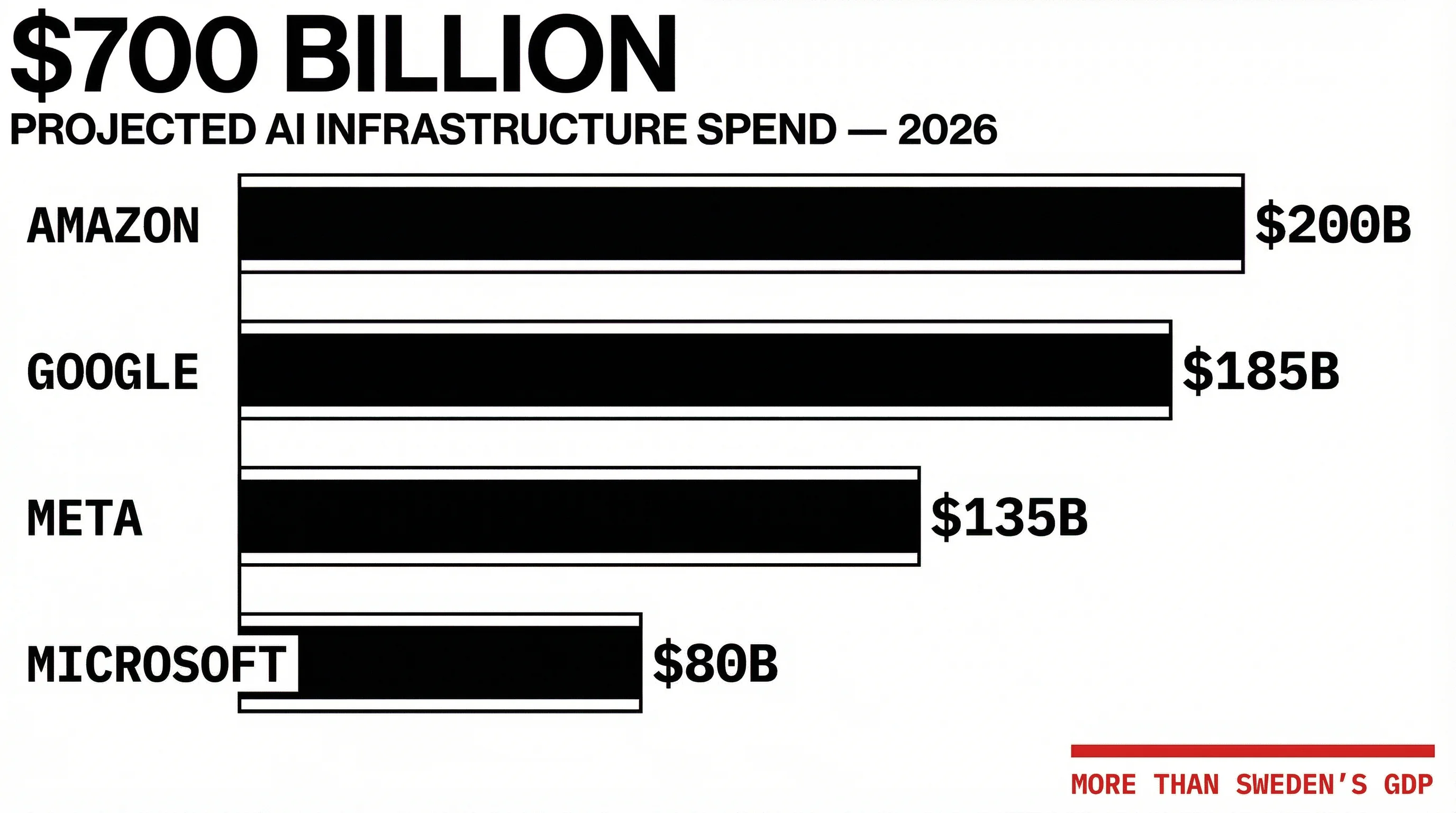

The $700 Billion Play

Right now, every major player in AI is running the same play. More data. More GPUs. Bigger clusters. Same architecture. They've convinced themselves that scale is destiny. That the biggest balance sheet wins. That this is a resource war.

The numbers are staggering. According to Fortune and CNBC, the four largest hyperscalers (Amazon, Google, Meta, and Microsoft) are on track to spend a combined $630 billion to $700 billion on AI infrastructure in 2026 alone. Amazon leads with a projected $200 billion, Google follows at $185 billion, and Meta plans up to $135 billion. Moody's has flagged $662 billion in off-balance-sheet data center lease commitments. Jensen Huang calls it "just the start."

That rivals the GDP of Sweden. It exceeds the GDP of Israel. It may be the most capital any industry has ever concentrated in a single bet.

And Nadella just told you it might not matter.

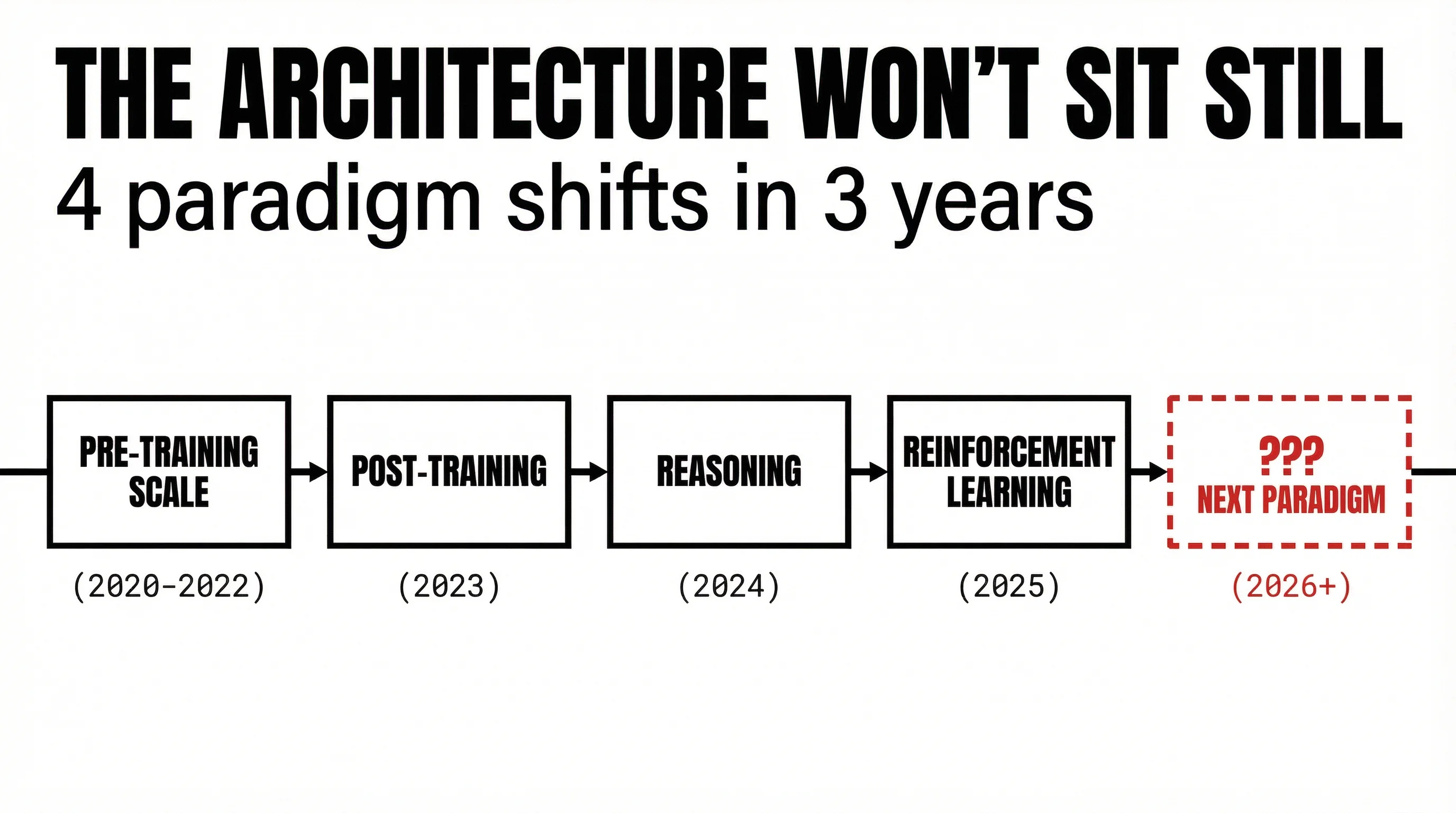

The Architecture That Won't Sit Still

Here's why. In the same interview, Nadella laid out the instability that everyone is choosing to ignore:

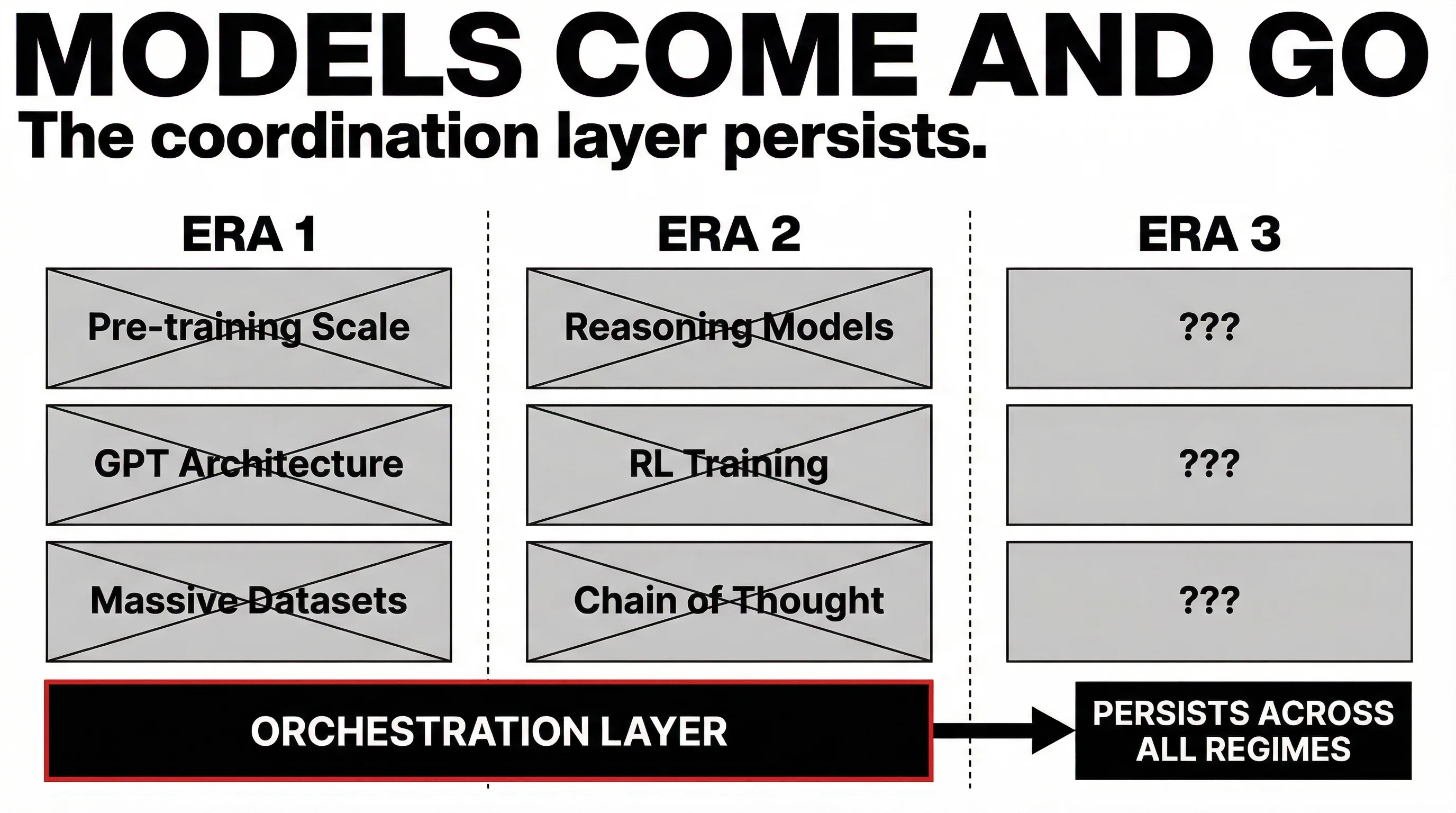

"If you look at where we've been — it was all about pre-training scale. Then it was about post-training. Then we came up with reasoning. Then we said, 'Oh, there's RL.' And so there's constant innovation that's happening even in what is considered transformers and transformer architectures."

Count the shifts. Pre-training scale. Post-training. Reasoning. Reinforcement learning. Four paradigms in roughly three years. Each one rewrote the rules. Each one made the previous moat irrelevant.

And the companies that didn't see the shift coming? They didn't get a warning. They just woke up behind.

Nadella isn't alone in this assessment. Google DeepMind CEO Demis Hassabis has been saying something remarkably similar: reaching AGI may take "one or two" more major breakthroughs beyond scaling alone, potentially in areas like reasoning, memory, and what he calls "world model" ideas. Two of the most powerful figures in AI are saying the same thing: the current era is not the final one.

We got an early taste of this in January 2025, when DeepSeek showed that a model reportedly trained for a fraction of the cost could rival models built on billions of dollars of infrastructure. One team. One insight. The entire industry scrambled.

That wasn't an anomaly. That was a preview.

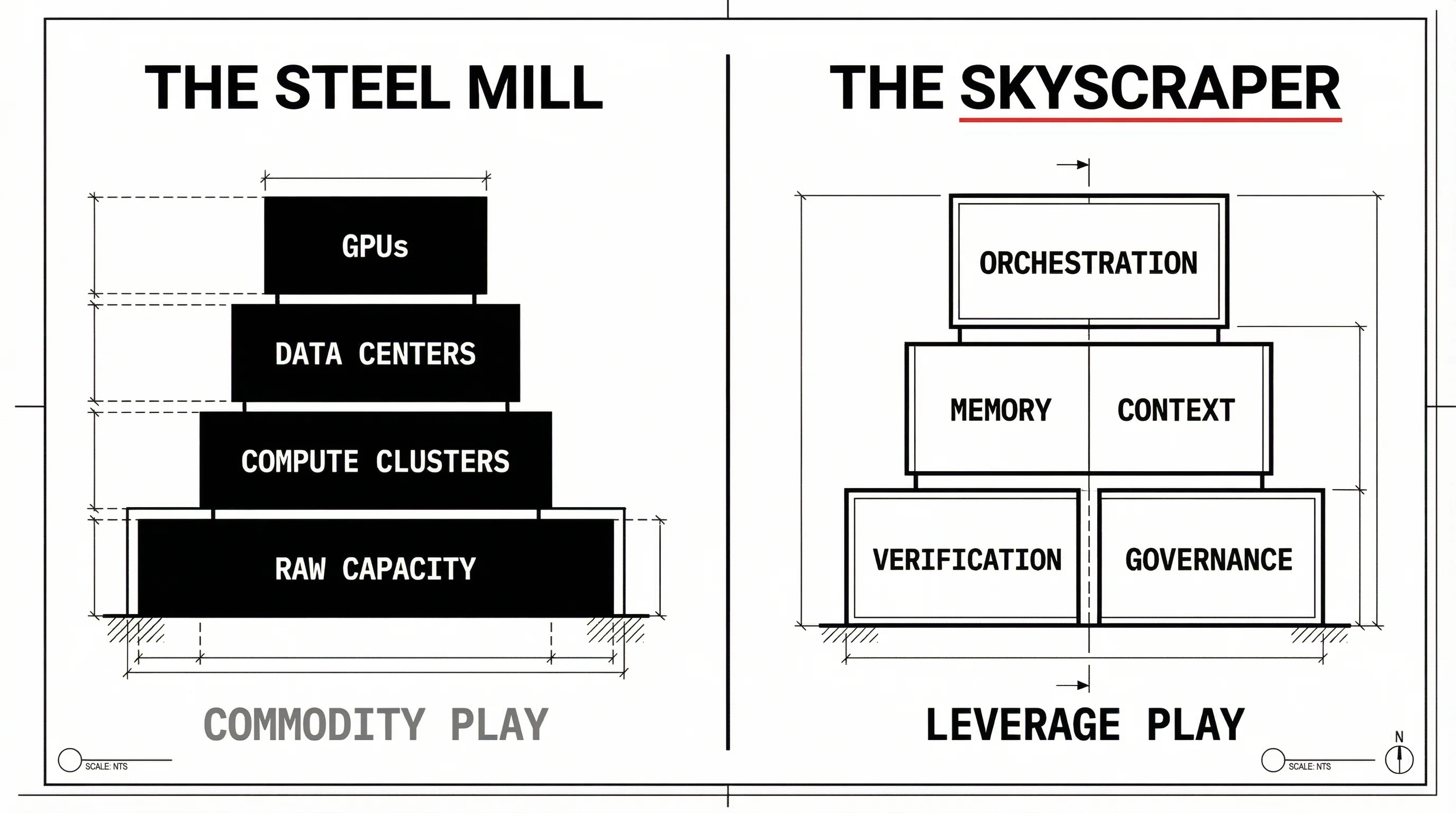

The Steel Mill Fallacy

Here's what the $700 billion data center crowd is missing.

They're building steel mills. And steel mills are necessary. Thank God someone is building them. We need the compute. The world needs the raw capacity. I'm glad it exists.

But the leverage was never in the steel.

During the Second Industrial Revolution, the companies that poured steel made money. Good money. Andrew Carnegie became one of the richest men in history. But the companies that figured out what to do with the steel (the railroads that connected a continent, the skyscrapers that built Manhattan, the automobiles that changed how humans lived) didn't just make money. They built empires. They changed civilization. They captured the value that the steel mills created but couldn't keep.

The steel was the commodity. The application was the leverage.

We're watching the same pattern unfold right now. The data centers are the steel mills of the AI era. Necessary. Capital-intensive. Impressive. And ultimately — a commodity play. A margin compression play. A race to the bottom where the biggest balance sheet wins and everyone else gets squeezed.

That's not leverage. That's a resource war with diminishing returns.

Where the Leverage Actually Lives

The real opportunity isn't in the compute. It's in what sits on top of the compute.

The orchestration layer.

The layer that takes raw AI capability — whatever model, whatever architecture, whatever paradigm shift comes next — and turns it into something that actually works. That coordinates. That remembers. That enforces rules. That builds autonomous organizations.

This is what most people miss. They look at the AI landscape and see a compute race. They see NVIDIA's market cap and assume the value accrues to the infrastructure.

It doesn't. It never has. Not in any technology revolution in history.

The value accrues to the layer that makes the infrastructure useful.

Think about it. AWS didn't build the internet. It built the layer that made the internet useful for businesses. Salesforce didn't build databases. It built the layer that made databases useful for sales teams. The infrastructure providers made money. The orchestration layers built empires.

The Regime Changes. The Orchestration Layer Persists.

This is the part Nadella understands but can't say directly — because he's invested in the current regime.

When the next model architecture lands (the one he describes as potentially "more efficient in its performance"), everything built for the current paradigm becomes a monument to the wrong bet. The $200 billion capex budgets. The hoarded GPUs. The multi-decade infrastructure contracts.

Everything except the orchestration layer.

Because the orchestration layer doesn't care which model wins. It doesn't care if it's GPT-7 or Claude 5 or something that hasn't been invented yet. It doesn't care if the architecture is transformers or state-space models or something we don't have a name for.

The orchestration layer coordinates whatever exists. It provides the memory, the context, the protocols, the verification, the execution framework that turns raw intelligence into organized work.

"Models come and go. Architectures rise and fall. The coordination layer persists."

That's not a feature. That's the only durable position in the entire stack.
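The article doesn't say how such a layer is built. As a minimal sketch only, assuming a hypothetical `ModelBackend` interface with a single `complete` method, the Python below shows what "doesn't care which model wins" can mean in practice: the orchestrator owns memory and rules, and the model behind it can be swapped without touching either. `EchoModel`, `UpperModel`, and `Orchestrator` are illustrative toys, not real vendor or library APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class ModelBackend(Protocol):
    """Hypothetical interface: anything that turns a prompt into text."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for one model vendor."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperModel:
    """Hypothetical stand-in for a different architecture."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


@dataclass
class Orchestrator:
    model: ModelBackend
    rules: list[Callable[[str], bool]] = field(default_factory=list)
    memory: list[tuple[str, str]] = field(default_factory=list)

    def run(self, task: str) -> str:
        # Context: prepend the most recent exchange so the model "remembers".
        context = " | ".join(f"{q}->{a}" for q, a in self.memory[-1:])
        prompt = f"{context} || {task}" if context else task
        answer = self.model.complete(prompt)
        # Verification: every rule must accept the output before it is kept.
        if not all(rule(answer) for rule in self.rules):
            raise ValueError("output rejected by a rule")
        self.memory.append((task, answer))
        return answer

    def swap_model(self, new_model: ModelBackend) -> None:
        # The model changes; memory and rules survive the swap.
        self.model = new_model


if __name__ == "__main__":
    orch = Orchestrator(EchoModel(), rules=[lambda s: len(s) < 200])
    print(orch.run("plan"))   # echo: plan
    orch.swap_model(UpperModel())
    print(orch.run("build"))  # PLAN->ECHO: PLAN || BUILD
```

Swapping `EchoModel` for `UpperModel` mid-session leaves the stored memory and the length rule intact. A real system would need actual model clients, persistence, and far richer verification; none of that is shown here.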

The Equation, Not the Data Center

A viral thread from @r0ck3t23 analyzing Nadella's comments, now with over 500,000 views, ended with a line worth sitting with:

"The most dangerous competitor in this race doesn't have a data center. They just have the equation."

I'd push that further.

The most valuable company in AI won't be the one with the most GPUs. It'll be the one that figured out how to make any GPU useful. Not through brute force. Through coordination. Through leverage. Through the operating system that turns raw compute into autonomous organizations.

We don't compete with the data centers. We're what makes the data centers worth building.

The Bet

Everyone else is betting on scale. We're betting on leverage.

Everyone else is building for the current paradigm. We're building for every paradigm that comes after it.

Everyone else is pouring steel. We're designing the skyscraper.

The regime will change. Nadella just told you it will. Hassabis agrees. DeepSeek proved it's possible. The question isn't whether — it's when. And when it does, the only thing that survives is the layer that was never dependent on the regime in the first place.

That's what we're building.

Sources

- Satya Nadella on OMR Podcast — "The $1 Billion OpenAI Bet, Microsoft's Future & The AI Revolution," March 2026

- Fortune — "Big Tech's $630 billion AI spree now rivals Sweden's economy," February 6, 2026

- CNBC — "Tech AI spending approaches $700 billion in 2026," February 6, 2026

- Reuters — "Big Tech to invest about $650 billion in AI in 2026, Bridgewater says," February 23, 2026

- Fortune — "Nvidia's Jensen Huang says tech's $700 billion AI capex is just the start," February 25, 2026

- Fortune / Moody's — "Moody's flags $662 billion risk at the heart of the data center build," February 25, 2026

- Times of India — "Microsoft CEO Satya Nadella may have just agreed with Google DeepMind CEO Demis Hassabis," March 3, 2026

- @r0ck3t23 — Viral analysis thread on Nadella's comments, March 2, 2026 (512K+ views)